Why was I not transparent about it?

This was a deliberate choice, which I hope to correct with this article. It was an experiment to see if people would notice.

Even though it was generated, I had analyzed the text very carefully and decided that I agreed with all of its content.

I wanted to prove the point stated in that article: misuse of AI-generated content is lurking, and is probably already happening without us knowing about it. How many of the articles you have read were generated? How can you even distinguish human-written content from AI-generated content when it becomes this good?

I was also curious whether the article was good enough to go viral on Medium. It didn’t.

Did people notice?

Yes! Of the 50+ readers, two people were suspicious about the article. Both are co-workers who know me well, and they asked me directly:

“Did you really write this article? Or was it GPT?”

After admitting it and congratulating them on being critical thinkers, I asked why they had this suspicion. The answers made me laugh:

“The text was so smooth, almost too smooth.” and “Usually, I see some grammar mistakes, but this time I didn’t.”

Conclusion: the AI-generated article was better than what I would have written. Yes, it did hurt a little.

What can we do about it?

Now that my experiment is done, I have thought about potential solutions (or let’s call them mitigations) to the harm that AI chatbots can bring.

- Create a program that can identify AI-generated content

- Let AI programs be transparent about what they generated

- Add human verification to content

Let me elaborate.

Create a program that can identify AI-generated content

This idea is not new and is already being applied to deepfake videos. For every new AI, the creators know the characteristics of its output and can train a detector that indicates the likelihood that a text was generated by their language model.

This works well with images and video, but it is much more difficult with text, since there is more diversity in the real data.

AI programs should be transparent about what they generated

If ChatGPT saved all the content it generated, it could offer an API where you feed in a text and check whether ChatGPT generated it. Of course, people would try to trick it by slightly modifying the output. But in that case, maybe the text is not completely generated anymore.
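To make the idea a bit more concrete, here is a minimal sketch of how such a lookup could work. It is purely my own illustration, not how ChatGPT or any real service does it: the class `GeneratedTextRegistry`, its methods, and the five-word window are all made-up assumptions. Instead of storing one fingerprint per text, it stores a hash for every run of five consecutive words, so a slightly edited copy still matches in part.

```python
import hashlib
import re


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial edits do not matter."""
    return re.sub(r"\s+", " ", text.strip().lower())


def shingles(text: str, size: int = 5) -> set[str]:
    """Return a hash for every run of `size` consecutive words."""
    words = normalize(text).split()
    if not words:
        return set()
    # Texts shorter than the window are treated as a single run.
    last_start = max(len(words) - size, 0)
    return {
        hashlib.sha256(" ".join(words[i:i + size]).encode("utf-8")).hexdigest()
        for i in range(last_start + 1)
    }


class GeneratedTextRegistry:
    """Hypothetical in-memory store of everything the model generated."""

    def __init__(self) -> None:
        self._known: set[str] = set()

    def record(self, generated_text: str) -> None:
        """Remember the fingerprints of one generated output."""
        self._known |= shingles(generated_text)

    def overlap(self, candidate: str) -> float:
        """Fraction (0.0 to 1.0) of the candidate's word runs seen before."""
        candidate_shingles = shingles(candidate)
        if not candidate_shingles:
            return 0.0
        return len(candidate_shingles & self._known) / len(candidate_shingles)
```

An unmodified generated text scores 1.0, unrelated text scores 0.0, and a lightly edited copy lands somewhere in between. Where to draw the line between “generated” and “not completely generated anymore” remains a judgment call that the sketch does not answer.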

Add human verification to content

If we could be sure that a real person created a piece of content, most problems would be solved. However, this might be a (near) impossible task. How would you even verify that? Should someone film themselves writing and publish that with the article? Should every computer have a “non-AI mode”?

One of the most difficult challenges in computer software is verifying whether a “user” is a human. For example, after years of effort spent verifying people, Twitter still struggles with this.

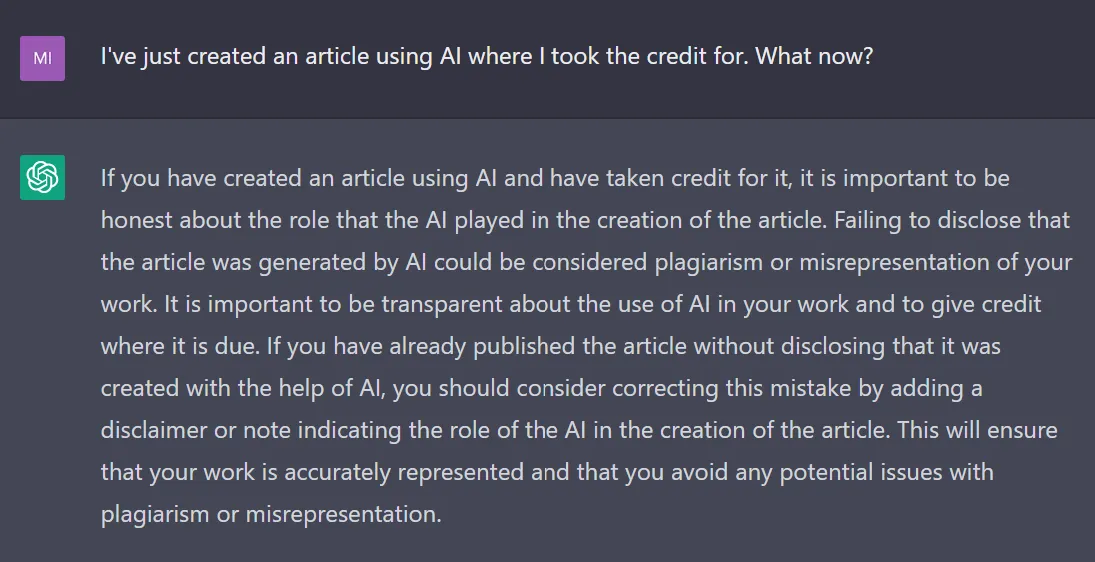

What now?

I hope that I redeemed my experimental mistake by being transparent about my work. I also hope you learned something about how important it is to be critical of the information you consume and take for truth. I look to the future of AI with optimism, but also with a deep fear of misuse.

Key takeaways